Protobuf Goes Scala-First

Protobuf is commonly associated with code generation. However, in large projects with thousands of message definitions, this approach generates an overwhelming amount of code. This, in turn, can create a slow development experience. In this post, I’ll share my personal experience working with Protobuf in a large Scala codebase and my journey in search of a solution to this problem.

Protobuf in Scala

Let me provide some background on what Protobuf is and how we typically use it in Scala.

Protobuf, a.k.a. Protocol Buffers, is a language-neutral, platform-neutral, extensible mechanism for serializing structured data. It is commonly used as a storage or communication protocol. The gRPC framework is built on top of Protobuf to provide an RPC interface between systems.

Typically, you define a schema in a .proto file, which is then used to generate code for the messages, together with encoders and decoders. Here’s an example of a message definition:

message User {
  string id = 1;
  string name = 2;
  int32 age = 3;
  repeated string tags = 4;
}

Scala has an excellent library for Protobuf called ScalaPB. It provides an sbt plugin that generates Scala code from a Protobuf schema, with various options to customize the generated code. It also supports gRPC by wrapping grpc-java with various effects libraries (Future, ZIO, fs2). Its ease of use, reliability, and speed have made it the de facto standard for Protobuf in Scala.

Let’s take a look at a message definition straight from my codebase.

message ToppingSnapshot {
  string id = 1;
  int32 data_id = 2;
  repeated ToppingSubOption sub_options = 3;
  int32 grade = 4;
}

Running ScalaPB code generation on this definition produces the following Scala code (I omitted the body of the class for brevity):

final case class ToppingSnapshot(
  id: _root_.scala.Predef.String,
  dataId: _root_.scala.Int,
  subOptions: _root_.scala.Seq[com.example.protobuf.ToppingSubOption],
  grade: _root_.scala.Int
) extends scalapb.GeneratedMessage

This is nice, but not the kind of Scala types we want for writing domain logic. Instead, our domain entity looks like this:

case class ToppingSnapshot(
  id: ToppingId,
  dataId: ToppingDataId,
  subOptions: List[ToppingSubOption],
  grade: ToppingGrade
)

As you can see, we use newtypes and refined types to define our domain types. This allows us to write more type-safe code and enforce business rules at the type level. We also don’t use Seq for collections but rather a concrete collection type that fits the usage pattern.
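To make the idea of newtypes with type-level rules concrete, here is a minimal sketch using Scala 3 opaque types. The type names mirror the entity above, but the underlying representations and the validation rules (non-blank ids, grades from 1 to 5) are assumptions made up for illustration, not the actual rules of the codebase.

```scala
// Illustrative only: representations and validation rules are assumed.
object domain:
  opaque type ToppingId = String
  object ToppingId:
    // Smart constructor: the only way to obtain a ToppingId from outside.
    def from(raw: String): Either[String, ToppingId] =
      if raw.trim.nonEmpty then Right(raw) else Left("ToppingId must not be blank")
    extension (id: ToppingId) def value: String = id

  opaque type ToppingGrade = Int
  object ToppingGrade:
    // Assumed business rule: a grade is between 1 and 5 inclusive.
    def from(raw: Int): Either[String, ToppingGrade] =
      if raw >= 1 && raw <= 5 then Right(raw)
      else Left(s"ToppingGrade out of range: $raw")
    extension (grade: ToppingGrade) def value: Int = grade

import domain.*

@main def newtypeDemo(): Unit =
  println(ToppingGrade.from(3).map(_.value)) // Right(3)
  println(ToppingGrade.from(9).isLeft)       // true
```

Outside of `domain`, a `ToppingGrade` cannot be confused with a plain `Int` or with a `ToppingId`, which is exactly the kind of guarantee that generated Protobuf classes with raw `String` and `Int` fields do not give.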

It is not possible to have ScalaPB generate the exact Scala code we want, so we resort to a data transformation library called Chimney to convert back and forth between the ScalaPB generated types and our domain types.

Chimney provides nice transformInto and transformIntoPartial methods to convert between two types. transformInto is a total conversion, while transformIntoPartial is a conversion that can potentially fail. When both types have the same “shape”, no boilerplate is needed.

// encoding
val protoSnapshot = snapshot.transformInto[protobuf.ToppingSnapshot]

// decoding
val snapshot = protoSnapshot.transformIntoPartial[domain.ToppingSnapshot]

However, we need additional boilerplate when types have different shapes. An example is Protobuf’s oneof types, which usually fit into this category because the generated code has a different structure than the typical enum or sealed trait in Scala (it uses a nested message that matches how oneof types are encoded in Protobuf). So we end up having to write custom transformers like these:

implicit val encoder: Transformer[entities.Owner, protobuf.Owner] =
  v => protobuf.Owner(v.transformInto[protobuf.Owner.Value])

implicit val encoder2: Transformer[Option[Int], protobuf.PurchaseMethod] = {
  case Some(value) =>
    protobuf.PurchaseMethod.PriceIndex(
      value.transformInto[protobuf.PurchaseMethod.PriceIndex.Value]
    )
  case None =>
    protobuf.PurchaseMethod.Free(protobuf.Empty())
}

The Problem(s)

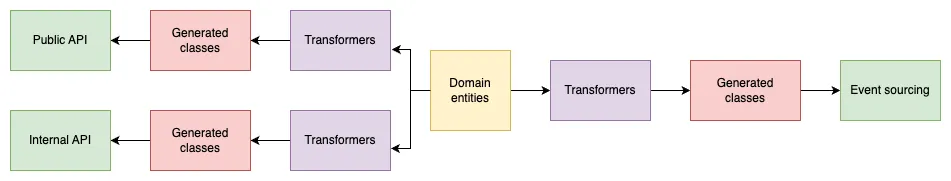

My team is developing a game server for a mobile game. We use Protobuf for a lot of things. We have two sets of APIs that use gRPC: one for the frontend (the mobile app) and one for our internal services (e.g. a backoffice). For both of these, Protobuf is used to provide a clear contract between the client and the server.

On top of that, our server uses an event sourcing architecture. We use Protobuf to serialize both journals (events) and snapshots into our database. In this case, using Protobuf helps us maintain the backward compatibility of our data since the schema is documented and changes have to be made explicitly.

All this means that when we add a new feature and need a new domain entity, we need to:

- Add the domain entity in our Scala code

- Add the Protobuf message definition in up to three different .proto files (the three protocols look similar but have subtle differences)

- Add custom Chimney transformers when needed, up to three times (once for each generated type)

As you can imagine, this is quite tedious! We constantly add new features, and doing this three times for every entity has become painful and error-prone. In particular, custom transformers are sometimes tricky and error messages can be difficult to understand for large, deeply nested objects.

Another issue stems from the size of the project. Our game server has been in production for over 5 years while receiving regular updates. When we released the game in 2021, we started with:

- 27 services

- 253 rpcs

- 980 messages

Now, as we celebrate the game’s 5th anniversary, we have:

- 94 services

- 1,312 rpcs

- 5,695 messages

As a result, we now see this when compiling a module containing one of the .proto schemas:

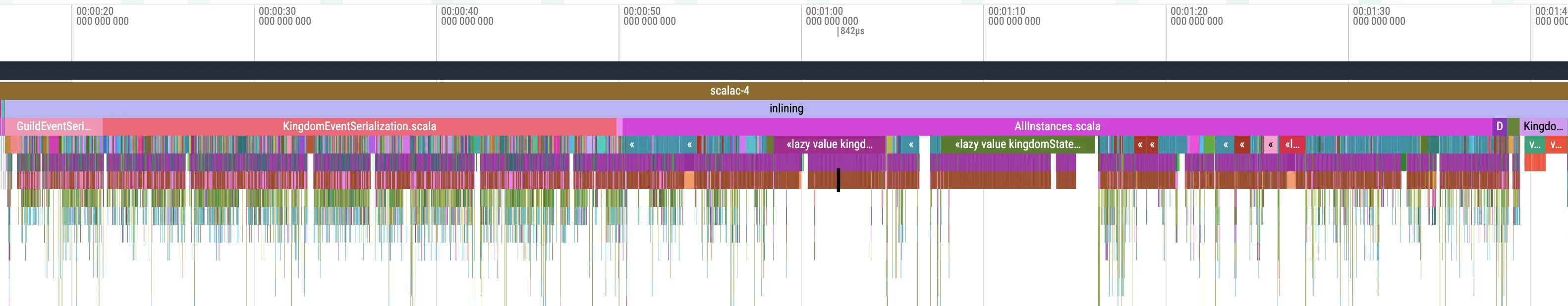

> [info] compiling 4626 Scala sources to /home/.../classes ...

That’s a lot of generated code to compile, and it takes significant time. That’s not all: the module containing the Chimney transformers is even slower to compile, spending a lot of time in the inlining phase due to heavy macro usage.

We’ve also seen compile time increase significantly when upgrading Chimney, which forced us to stick with an older version. Not ideal!

Another issue affecting us is Metals completions being slow on large projects. The more code we generate, the slower the completions are. Code navigation seems to be affected as well.

Combined, these issues make our development experience worse as time goes on.

Disclaimer

Note that I don’t blame the maintainers of Chimney or Metals for these issues. They are doing a great job and I am thankful for their work. These issues are triggered by the large size of the codebase and are difficult to fix without good reproducers, which we can’t provide due to the proprietary nature of the project.

Alternatives

So, what can we do about this?

We’ve considered contributing to Chimney, Metals, or the compiler to improve performance, but these are complex changes demanding significant effort, and that would only address part of the problem. We’ve also considered creating our own sbt plugin to generate “clean” domain entities from .proto files, but this would require polluting our protocol with a lot of annotations (to specify the expected types everywhere): unacceptable since the protocol is shared with developers who don’t use Scala (our frontend team, for example).

What if… we generated our .proto files from domain entities instead of the other way around?

At first glance, this approach feels almost heretical: the entire Protobuf ecosystem is designed around writing your .proto files first and generating code for your target languages afterward. This pattern is deeply ingrained not just for technical reasons, but also for the clarity and interoperability it provides, especially when working with services across different stacks or teams. One immediate concern is that any change to the code could trigger changes in the schema and potentially break backward compatibility, which is particularly critical for the persistence protocol.

But when you think about it, you can still commit .proto files to your repository so that all changes are tracked and reviewed. Just because the protocol is modified manually doesn’t guarantee backward compatibility. In fact, we broke backward compatibility several times in the past by mistake, and we have tools in place to automatically detect that. The same process works whether the protocol is generated automatically or manually. An important additional step is to have the CI verify that the protocol matches the Scala code (in case you forget to run the protocol generation).
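As a rough idea of what such a compatibility check can look like, here is a deliberately conservative sketch that compares two versions of a message by field number. The `Field` and `Message` model is hypothetical and much simpler than a real schema; it is not the tool we use. It flags fields that disappeared without their number being reserved, and fields whose type changed (some type changes are wire-compatible in Protobuf, so a real tool would be more lenient there).

```scala
// Hypothetical, simplified model: field number -> field definition.
final case class Field(name: String, tpe: String)
final case class Message(
    name: String,
    fields: Map[Int, Field],
    reserved: Set[Int] = Set.empty
)

// Lists changes that could break readers of previously written data.
def breakingChanges(old: Message, updated: Message): List[String] =
  old.fields.toList.sortBy(_._1).flatMap { case (number, before) =>
    updated.fields.get(number) match {
      // Removed, but the number is reserved so it cannot be reused by mistake.
      case None if updated.reserved.contains(number) => Nil
      case None =>
        List(s"${old.name}: field $number (${before.name}) removed without reserving its number")
      case Some(after) if after.tpe != before.tpe =>
        List(s"${old.name}: field $number changed type from ${before.tpe} to ${after.tpe}")
      // Same number and type: a rename does not change the binary encoding.
      case Some(_) => Nil
    }
  }

@main def compatDemo(): Unit =
  val v1 = Message("User", Map(1 -> Field("id", "string"), 2 -> Field("age", "int32")))
  val v2 = Message("User", Map(1 -> Field("id", "string")))
  breakingChanges(v1, v2).foreach(println)
  println(breakingChanges(v1, v2.copy(reserved = Set(2))).isEmpty) // true
```

The check only needs the old and new schema as input, so it does not matter whether the new schema was written by hand or generated from Scala code.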

With this idea in mind, I started a proof of concept.

A few months later…

Introducing Proteus

The experiment proved successful, so I made it open source. Proteus is a library that allows you to generate a Protobuf codec from a Scala type. You can then use that codec to encode and decode messages, and also to render the corresponding Protobuf schema. Let’s see an example:

given ProtobufDeriver = ProtobufDeriver

val codec = ProtobufCodec.derived[ToppingSnapshot]

val snapshot = ???
val encoded = codec.encode(snapshot)
val decoded = codec.decode(encoded)

println(codec.render())
// syntax = "proto3";
//
// message ToppingSnapshot {
//   string id = 1;
//   int32 data_id = 2;
//   repeated ToppingSubOption sub_options = 3;
//   int32 grade = 4;
// }

Because Protobuf is commonly used with gRPC, Proteus also provides a way to declaratively define gRPC services using those codecs, a bit like Tapir does for HTTP. You can then render the whole service as a .proto file.

given ProtobufDeriver = ProtobufDeriver

case class HelloRequest(name: String) derives ProtobufCodec
case class HelloReply(message: String) derives ProtobufCodec

val sayHelloRpc = Rpc.unary[HelloRequest, HelloReply]("SayHello")
val greeterService = Service("examples", "Greeter").rpc(sayHelloRpc)

println(greeterService.render(Nil))
// syntax = "proto3";
//
// package examples;
//
// service Greeter {
//   rpc SayHello (HelloRequest) returns (HelloReply) {}
// }
//
// message HelloRequest {
//   string name = 1;
// }
//
// message HelloReply {
//   string message = 1;
// }

You can then use this service definition to implement a gRPC server…

val service =
  ServerService(using DirectServerBackend)
    // provide rpc implementation
    .rpc(sayHelloRpc, req => HelloReply(s"Hello, ${req.name}!"))
    .build(greeterService)

ServerBuilder.forPort(8080).addService(service).build().start()

… and a client!

val backend =
  DirectClientBackend(
    ManagedChannelBuilder.forAddress("localhost", 8080).usePlaintext().build()
  )

val sayHelloClient = backend.client(sayHelloRpc, greeterService)

sayHelloClient(HelloRequest("Pierre"))
// HelloReply("Hello, Pierre!")

This example uses the “direct” backend, which is the simplest one, but there are backends for Future, ZIO and Cats Effect (only the latter two support streaming). Anything based on grpc-java can be added easily.

Under The Hood

Proteus is built on top of zio-blocks-schema, a library that provides a schema representation of any Scala type and lets you do cool things with it. Note that despite its name, the library is extremely lightweight and does not have any external dependencies (it does not depend on ZIO).

The one feature we rely on is this: once you have a Schema for a type, you can derive an instance of any typeclass for that type, as long as you provide a Deriver for that typeclass.

trait TC[A]

val schema: Schema[Foo] = Schema.derived[Foo]
val deriver: Deriver[TC] = ???

// generate a TC instance from a schema and a deriver
val instance: TC[Foo] = schema.derive(deriver)

// in our first example,
val codec = ProtobufCodec.derived[ToppingSnapshot]
// is actually doing this under the hood:
val codec = Schema.derived[ToppingSnapshot].derive(ProtobufDeriver)

The Schema derivation is extremely fast and doing it on our thousands of entities did not affect compilation time. For that I must thank @plokhotnyuk who worked tirelessly to make the macros fast and efficient, as well as fixing absolutely every issue I reported.
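The split between a schema and a deriver can be illustrated with a toy model. To be clear, this is not the zio-blocks-schema API: `MiniSchema`, `MiniDeriver`, `derive`, `RenderProto` and `FieldNames` are invented names, the schema here is untyped (the real one is indexed by the Scala type and produces a `TC[A]`), and the renderer only handles flat records. The point it shows is that one schema description can be interpreted by several interchangeable derivers.

```scala
// Toy model with invented names, NOT the zio-blocks-schema API.
enum MiniSchema:
  case Str, Integer
  case Record(name: String, fields: List[(String, MiniSchema)])

// A deriver says how to build a T for each kind of schema node.
trait MiniDeriver[T]:
  def string: T
  def int: T
  def record(name: String, fields: List[(String, T)]): T

// Walks the schema once and lets the deriver assemble the result.
def derive[T](schema: MiniSchema, deriver: MiniDeriver[T]): T =
  schema match
    case MiniSchema.Str     => deriver.string
    case MiniSchema.Integer => deriver.int
    case MiniSchema.Record(name, fields) =>
      deriver.record(name, fields.map((field, s) => field -> derive(s, deriver)))

// Deriver 1: renders a proto-like message (flat records only).
object RenderProto extends MiniDeriver[String]:
  def string: String = "string"
  def int: String = "int32"
  def record(name: String, fields: List[(String, String)]): String =
    fields.zipWithIndex
      .map { case ((field, tpe), i) => s"  $tpe $field = ${i + 1};" }
      .mkString(s"message $name {\n", "\n", "\n}")

// Deriver 2: collects field names from the very same schema.
object FieldNames extends MiniDeriver[List[String]]:
  def string: List[String] = Nil
  def int: List[String] = Nil
  def record(name: String, fields: List[(String, List[String])]): List[String] =
    fields.flatMap((field, nested) => field :: nested)

@main def deriveDemo(): Unit =
  val user = MiniSchema.Record("User", List("id" -> MiniSchema.Str, "age" -> MiniSchema.Integer))
  println(derive(user, RenderProto))
  println(derive(user, FieldNames)) // List(id, age)
```

In the same spirit, a Protobuf deriver only has to describe how each kind of schema node is encoded, decoded and rendered; the schema derivation itself is shared with every other typeclass.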

Most of the challenge was about implementing the Deriver for Protobuf with the help of the spec and a good look at the code generated by ScalaPB. One of the trickiest parts was getting good runtime performance. In fact, the code generated by ScalaPB is equivalent to having hand-written encoders and decoders for each type, so it is as fast as it can be. My first version of Proteus was… 35x slower!

Indeed, generic code introduces many indirections and boxing/unboxing compared to a hand-written implementation per type. But after multiple rounds of profiling and optimizations (in particular for caching sizes), I managed to get it down to a reasonable level. In my benchmarks, Proteus is about 1–2x slower than ScalaPB with Chimney, which is still extremely fast, and the difference gets smaller for larger messages. On the other hand, Proteus allocates much less memory than ScalaPB with Chimney. To give a comparison, Proteus is about 1.5-2x faster than libraries like uPickle and Borer.
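For readers unfamiliar with the wire format, the sketch below shows the two building blocks from the Protobuf encoding spec that any encoder ends up writing: varints, and tags of the form `(field_number << 3) | wire_type`. The helper names are invented for illustration and this is not code from Proteus or ScalaPB; the varint helpers also assume non-negative values, which is enough for tags and lengths.

```scala
import java.io.ByteArrayOutputStream

// Base-128 varint: 7 bits per byte, high bit set on all but the last byte.
// Assumes value >= 0 (sufficient for tags and lengths).
def writeVarint(out: ByteArrayOutputStream, value: Int): Unit = {
  var v = value
  while ((v & ~0x7f) != 0) {
    out.write((v & 0x7f) | 0x80)
    v = v >>> 7
  }
  out.write(v)
}

// Number of bytes writeVarint emits for the same (non-negative) value.
def varintSize(value: Int): Int = {
  var v = value
  var n = 1
  while ((v & ~0x7f) != 0) { n += 1; v = v >>> 7 }
  n
}

// Wire types used here: 0 = varint, 2 = length-delimited.
def writeTag(out: ByteArrayOutputStream, fieldNumber: Int, wireType: Int): Unit =
  writeVarint(out, (fieldNumber << 3) | wireType)

// Strings and nested messages are written as: tag, byte length, payload.
// The length comes BEFORE the payload, so it must be known up front.
def writeLengthDelimited(out: ByteArrayOutputStream, fieldNumber: Int, payload: Array[Byte]): Unit = {
  writeTag(out, fieldNumber, 2)
  writeVarint(out, payload.length)
  out.write(payload, 0, payload.length)
}

def hex(bytes: Array[Byte]): String =
  bytes.map(b => f"${b & 0xff}%02x").mkString(" ")

@main def wireDemo(): Unit = {
  val out = new ByteArrayOutputStream()
  writeTag(out, 1, 0)
  writeVarint(out, 150) // field 1 = 150
  writeLengthDelimited(out, 2, "testing".getBytes("UTF-8")) // field 2 = "testing"
  println(hex(out.toByteArray)) // 08 96 01 12 07 74 65 73 74 69 6e 67
}
```

This sketch sidesteps the size problem by taking an already encoded payload. An encoder that writes nested messages directly into the output needs each message's byte size before writing it, and without caching those sizes get recomputed at every nesting level, which is why caching them pays off.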

Since I wrote this, we deployed it to production and it is actually using a little less CPU than our original implementation with ScalaPB and Chimney. The decrease in allocations is consistent with the benchmarks.

Schema Customization & Evolution

There might be cases where you want the Protobuf schema to be different from the domain entity. In that case you always have the option to create a dedicated type as long as you provide a transformation between the two.

val codec: ProtobufCodec[DomainEntity] =
  ProtobufCodec
    .derived[ProtoEntity]
    .transform[DomainEntity](proto => ???, domain => ???)

Of course, we want to avoid that as much as possible, so Proteus provides a simpler mechanism for “common” customizations, such as renaming types or fields, adding prefixes/suffixes to enum values, excluding fields, etc.

val deriver =
  ProtobufDeriver
    // make all enum values prefixed with the enum name
    .enable(DerivationFlag.AutoPrefixEnums)
    // rename `Person` to `User`
    .modifier[Person](rename("User"))
    // exclude the `age` field
    .modifier[Person]("age", excluded)
    // add a comment to the `name` field
    .modifier[Person]("name", comment("The name of the person"))

Another common case is removing a field from a domain entity. That will cause the field to be removed from the Protobuf schema, which will affect the ids of other fields, breaking backward compatibility. To solve this, Proteus provides a way to mark field ids as reserved so that they are skipped when ids are assigned. You can also force a field to use a specific id.

val deriver =
  ProtobufDeriver
    // reserve ids 1 and 3 so that they won't be assigned
    .modifier[Person](reserved(1, 3))
    // force `name` to use id 4
    .modifier[Person]("name", reserved(4))

Of course, you can always use transform to achieve the same result, or when more complex transformations are needed.
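To see why reserving ids matters, consider how sequential id assignment interacts with removed fields. The function below is a hypothetical sketch of such an assignment, not the actual Proteus implementation: fields get the next free id in declaration order, reserved ids are skipped, and forced ids are honored.

```scala
// Hypothetical sketch of sequential id assignment, not Proteus internals.
def assignFieldNumbers(
    fields: List[String],
    reserved: Set[Int] = Set.empty,
    forced: Map[String, Int] = Map.empty
): List[(String, Int)] =
  val taken = reserved ++ forced.values
  // Candidate ids: 1, 2, 3, ... minus everything reserved or forced.
  val free = Iterator.from(1).filterNot(taken.contains)
  // getOrElse takes its default by name, so a free id is only consumed
  // for fields that do not have a forced id.
  fields.map(name => name -> forced.getOrElse(name, free.next()))

@main def fieldNumberDemo(): Unit =
  // Original entity.
  println(assignFieldNumbers(List("legacy", "id", "name")))
  // List((legacy,1), (id,2), (name,3))

  // After removing `legacy` with no reservation, every id shifts: incompatible.
  println(assignFieldNumbers(List("id", "name")))
  // List((id,1), (name,2))

  // Reserving id 1 keeps the remaining fields where they were.
  println(assignFieldNumbers(List("id", "name"), reserved = Set(1)))
  // List((id,2), (name,3))
```

With the reservation in place, old data written with `id = 2` and `name = 3` still decodes correctly after the field is removed.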

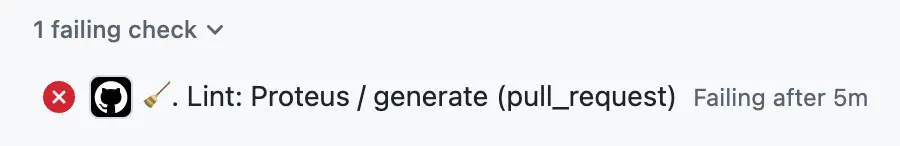

I mentioned it earlier but I will reiterate: if you care about backward compatibility, it is essential that you push the .proto files to git and make the CI verify that the protocol matches the Scala code by calling the schema generation and checking for differences. That way you ensure that all schema changes are intentional and reviewed, just like when you modified the schema manually.
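A check of this kind can be very small. The sketch below is one possible shape for it, using only the standard library; `checkSchema` and the file name are hypothetical, and the `rendered` string stands in for whatever your schema generation returns (for example the output of `render` shown earlier).

```scala
import java.nio.charset.StandardCharsets
import java.nio.file.{Files, Path, Paths}

// Compares the freshly rendered schema with the committed .proto file.
def checkSchema(rendered: String, committed: Path): Either[String, Unit] =
  val onDisk =
    if Files.exists(committed) then
      new String(Files.readAllBytes(committed), StandardCharsets.UTF_8)
    else "" // a missing file counts as out of date
  if onDisk == rendered then Right(())
  else Left(s"$committed is out of date: regenerate the schema and commit the result")

@main def verifySchema(): Unit =
  // Placeholder: in a real build this string comes from the schema generation.
  val rendered = "syntax = \"proto3\";\n"
  checkSchema(rendered, Paths.get("greeter.proto")) match
    case Right(_)  => println("schema is up to date")
    case Left(err) =>
      System.err.println(err)
      sys.exit(1) // a non-zero exit code fails the CI job
```

Running the same entry point with a flag that writes the file instead of comparing it gives you the regeneration command and the CI check from a single piece of code.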

In our case, the CI will fail like this if the schema is not up to date:

A Surprise Benefit

Migrating our large project was not an easy task. While we could tolerate a few changes in the API schemas, the persistence protocol had to be fully compatible, which required quite a bit of effort. But I discovered a benefit I hadn’t expected: consistency!

While migrating the schema, I realized that it was highly inconsistent. For the same kind of Scala code, we had multiple variations in the way we wrote the Protobuf schema: different naming patterns, inconsistent use of nesting, incorrect or missing field ids, etc. I found a whole range of mistakes that are solved by automation. And even though it made the migration more difficult because I had to preserve some of these inconsistencies, the Scala-first approach has proven much nicer for all new code and projects. Not only is it nearly seamless to generate the Protobuf schema, but that schema is now consistent and free of mistakes.

Conclusion

I hope this little story was interesting. Should you use Proteus? Probably not, unless you have similar pain points. ScalaPB does a great job in most cases, but it’s always good to have different options.

I think the main takeaway is that there is no absolute “right” way to do things. Just because a practice is common doesn’t mean it’s the best or the only correct one. You should always consider the trade-offs and choose (or sometimes, build!) the best tool for the job.

If you are interested, the Proteus repo has nice documentation and examples.